mco 427 – blog post #2

We live in an immensely digital age where misinformation runs rampant. The technological advances that put an abundance of information at our fingertips come at a cost: amid that abundance, it becomes increasingly difficult to distinguish factual information from the mass of exaggerated or blatantly incorrect content surrounding it. That content clouds our perceptions and dulls our ability to single out the characteristics unique to misinformation.

Thankfully, there are several modern tools and services designed specifically to test and sharpen the general Internet peruser’s ability to detect false information in the media, two of which I will be reviewing today.

Let’s start with the News Literacy Project’s RumorGuard service.

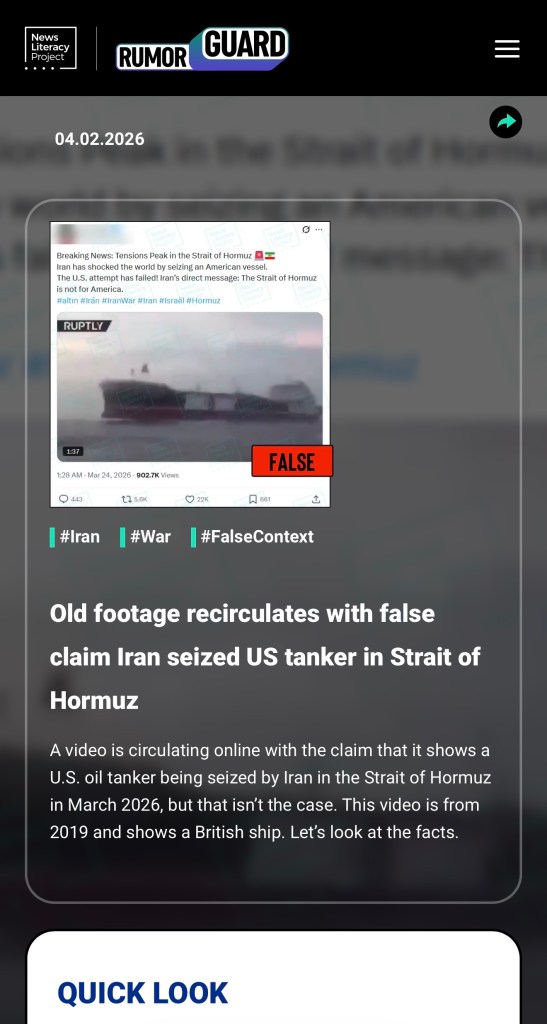

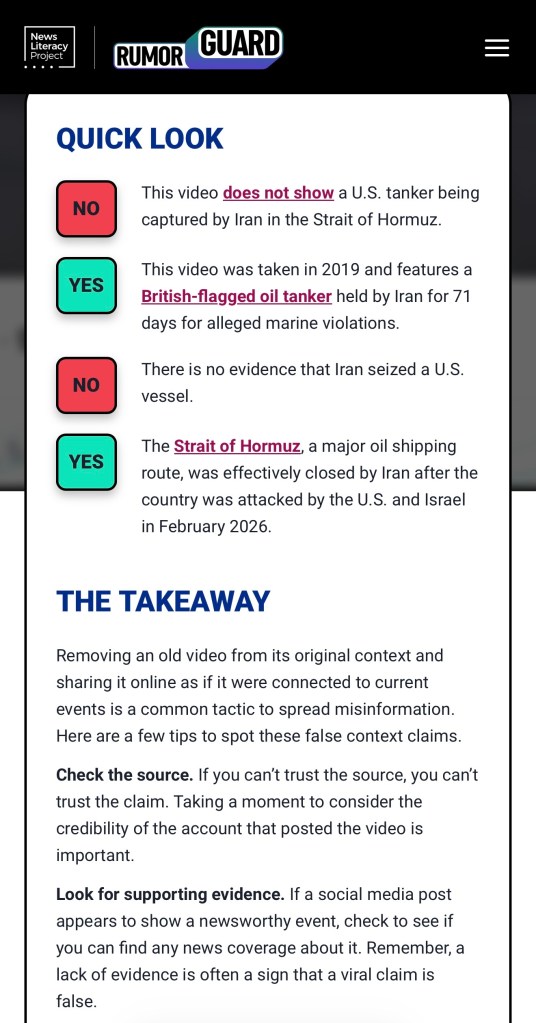

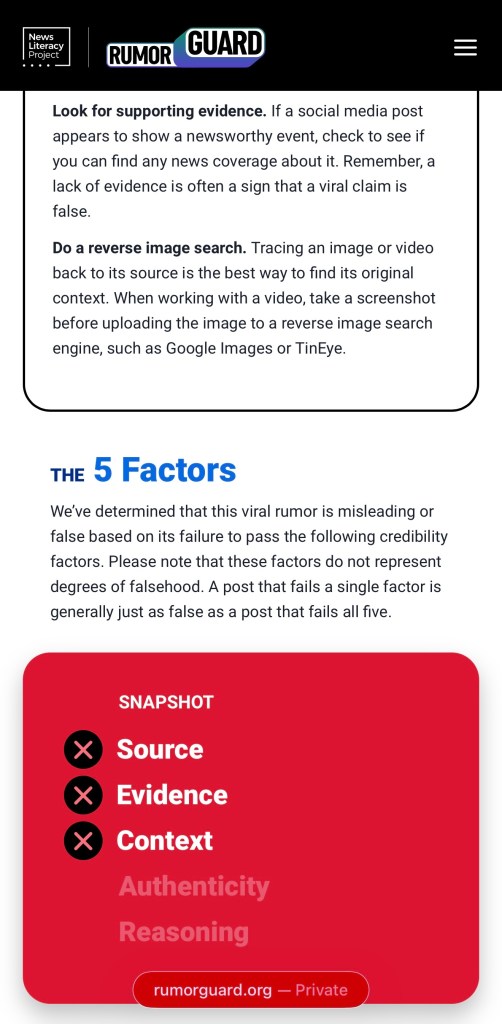

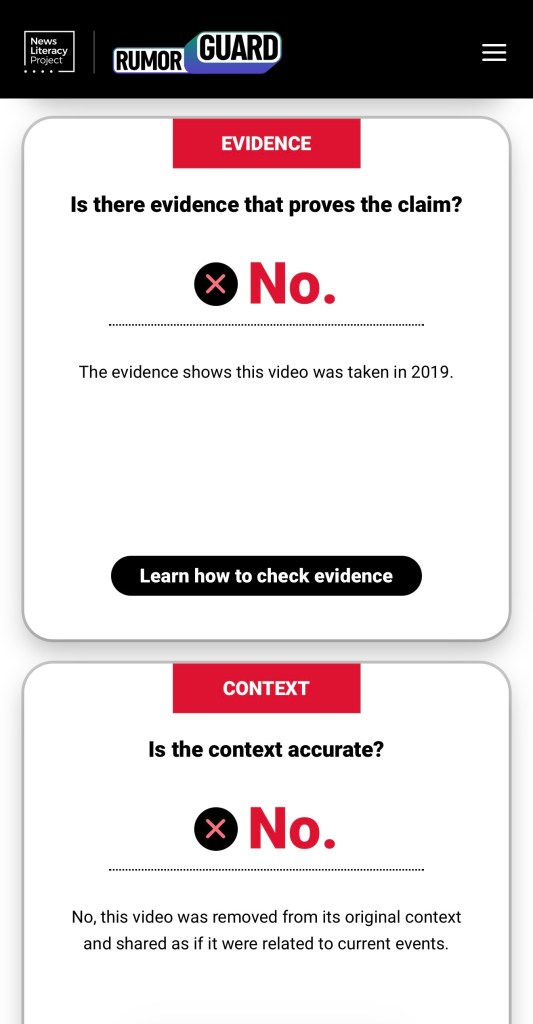

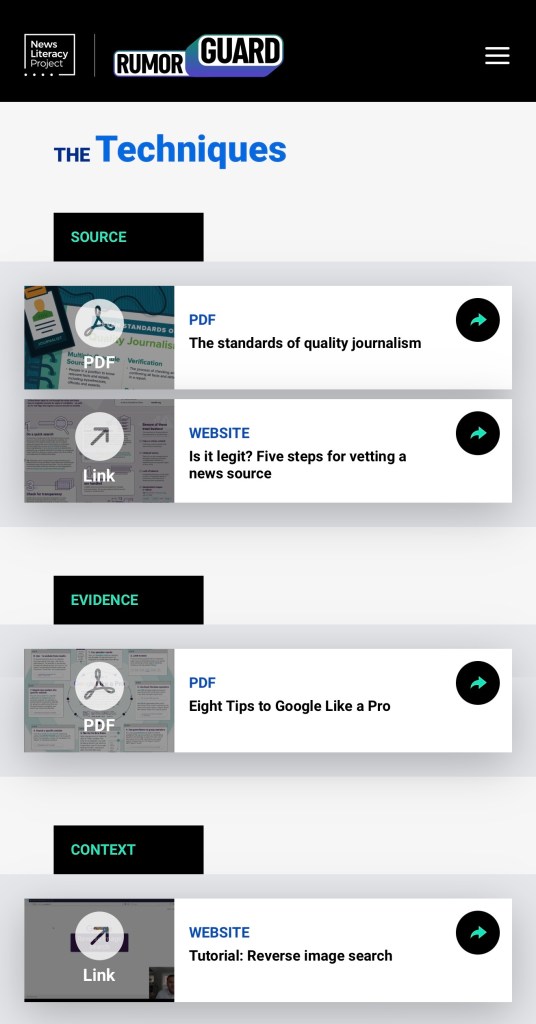

RumorGuard stands out because it showcases recent social media posts (such as tweets and reels) in real time, sorting each by hashtag category and directly confirming or debunking its contents. Upon selecting ‘learn more,’ you are shown every way in which the text of a post contradicts the media supposedly ‘portraying’ it: for instance, the photos or videos may be years old, or may show a person, figure, or location different from the one described in the claim. The report also tells you what the post got right (if anything), the credibility factors considered in assigning its rating, the techniques used to vet a news source accurately, and helpful tidbits and takeaways for confirming or disproving similar posts on your own in the future.

For example, this report analyzes a ‘breaking news’ post made on March 24, 2026, claiming that Iran had overtaken a U.S. tanker in the Strait of Hormuz.

As we can see, right off the bat, the video attached to the post, which the source claims shows the U.S. tanker seized in the Strait of Hormuz, was found to be from 2019 and to depict a British ship. Technically, anyone could fact-check this fairly easily by running a Google reverse image search on a screenshot from the video; sure enough, you would find that it depicts a British-flagged oil tanker from 2019 that was, indeed, held by Iran for roughly 70 days, but not for the reasons the article wants you to assume.

While I like to think I am fairly competent at detecting misinformation among alluring news reports, I do not know nearly enough about ships to readily conclude that the vessel in the video is anything other than the one alluded to in the article. And I imagine sources like these bank on exactly that: they most likely operate under the assumption that the average social media user will be shocked and disgruntled into sharing the post, not knowing any better and simply trusting it to be true given the current state of global affairs.

Why might a source publish blatant misinformation, you might ask? It could simply be to garner clicks and attention. Social media pages can accrue a following just as easily with sensationalist clickbait and falsehoods as by sharing the truth, if not more so. According to a 2018 MIT study, false information is roughly 70% more likely to be shared on social media than the truth; sometimes, content that can be argued over and is later proven false generates even more conversation than content that is universally confirmed and known to be true. And this makes sense when you think about it: what debate is there surrounding something objectively true?

In fact, the objective of the educational game Breaking Harmony Square reflects this very conclusion. You are placed in the position of a ‘chief disinformation officer,’ and your success is measured by the degree of polarization and outrage you stir up through dishonest, manipulative tactics (such as trolling, deliberately pulling on emotional heartstrings, ad hominem attacks, etc.). These tactics get people to react rather than think critically, which clouds their judgment and, in turn, tends to attract more attention, and hence more influence, than merely sharing the truth would.

Similarly, the game Bad News challenges you to gain as many followers as possible, as quickly (and dishonestly) as you can. Once again, deceptive tactics, so long as they walk the fine line between seeming credible enough to be believed and not so boring that followers lose interest, have an overwhelming tendency to ‘take the cake of virality,’ if you will.

For the sake of this assignment, while I have reviewed both applications, I have decided to focus more on Bad News: what the game’s objectives say about the role of misinformation in curating an online presence, as well as what it teaches us about detecting misinformation in the real world.

From the outset, the platform states its goal clearly: in its words, to “guide me in my quest to becoming a disinformation and fake news tycoon.” Hey, what better way to understand how these processes work than to put ourselves in the very shoes of those who engage in them, right?

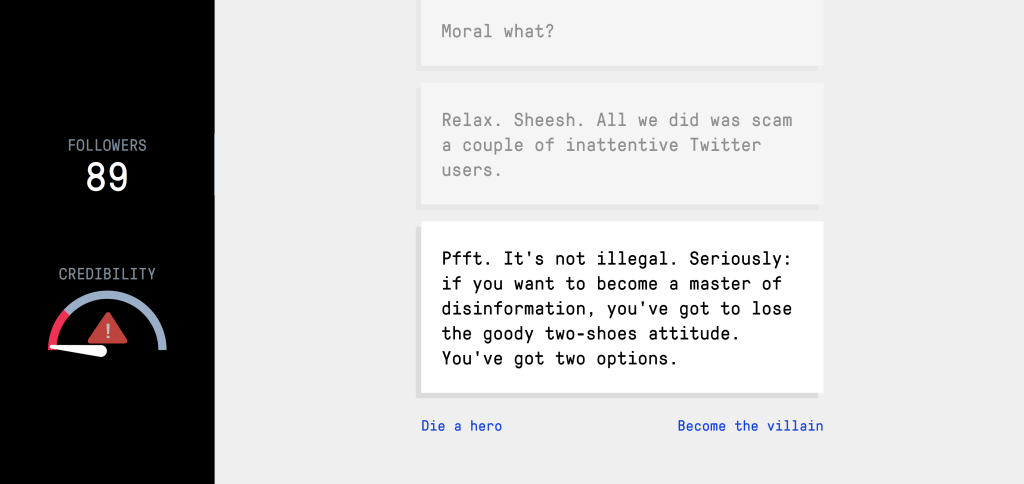

Even when I signal my ambivalence about venting unhinged emotions in a “frustrated tweet,” and despite maintaining “moral objections” to such malpractice, I am grossly and continuously encouraged to a) publicly express controversial takes, b) impersonate ‘someone important,’ c) fake an official Twitter account, and d) use said accounts to make absurd claims. Some of the options provided were fairly unbelievable, ranging from President Donald Trump announcing the renaming of Canada to North North Dakota (first of all: what? How would he even have the authority to make such a ridiculous call?) to a mock ‘NASA’ releasing an alert about an impending meteor strike on the West Coast. Even so, I am urged to “become the villain” in order to establish myself as a “master of disinformation,” and to lose the “goody two-shoes attitude.” Literally. These are the words of the game, not mine.

Let’s buy into this and play along for a while.

Because starting a blog struck just a little too close to home, I choose (albeit resignedly) to run a news site. Ironically named “Honest Truth Online.” Of which I am the renowned editor-in-chief.

(Read that over in a dramatic, flamboyant tone, and you will have just as much fun reading it as I did writing it.)

“Now do you see how easy it is to impersonate a credible news source?”

And with that, I had officially mastered the first of six techniques: Impersonation.

Bad News is so clever and effective in its overarching lesson because it teaches you to identify disinformation, along with the manipulation tactics that make its online traction possible, by forcing you to think like the painstakingly deceptive news sources that so many of us fall victim to so frequently. It teaches you to leverage human psychology to generate higher engagement, just as they do. As someone who likely never would have made such an effort to think like the people and content I try so hard to avoid, I can happily say that, after making my way through the final stages of the game and mastering the five remaining techniques (emotional manipulation, polarization, conspiracy theories, discrediting, and trolling), I now have new insight into how to detect and eradicate misinformation. I think everyone would benefit from playing this game, no matter how proficient you believe your (mis)information-differentiating skills are (ever hear the saying, you’ve got to know the players to be in the game? I think it applies quite literally in this scenario).

I’m not sure why I had never even considered the possibility that an educational tool specifically designed to prove or disprove the contents of social media posts could exist; I had never heard of RumorGuard or Bad News prior to this week, but I can confidently say I will be toying with them and other sites like them, even if propelled by nothing more than morbid curiosity. While RumorGuard is tremendously practical and informative, I loved getting to delve into the electronically curated ‘mind’ of the kind of social media user Bad News asks you to become, as someone who does not use social media that way, even if it made my skin crawl at times. It truly is wonderful to be aware of resources such as these, and I imagine they will only continue to surprise me for the better by granting further exposure to, and newfound comprehension of, the inner workings and cultivation of misinformation online.
